Aaware is developing a new voice development platform that is based on the Zynq UltraScale+ MPSoC device and will feature Infineon’s XENSIV digital MEMS microphones. More details on the full capabilities of this new platform will be revealed soon. Under the terms of this partnership, Infineon will supply its most sensitive digital MEMS microphones, which will be designed into a microphone array in the new platform.

“The formation of this partnership starts with a collaboration to bring commercial and industrial applications a superior sound capture capability, including extended support for embedded neural networks and can evolve to bring additional acoustic intelligence to our joint customers,” says Joe Gianelli, CEO of Aaware.

“We are impressed with Aaware’s voice capture technology and complete single chip SoC implementation for noise, echo, reverb-cancellation and wake word, and we are intrigued with their vision for advanced sound capture and neural network support,” says Roberto Condorelli, RFS Marketing Manager of Infineon Technologies AG.

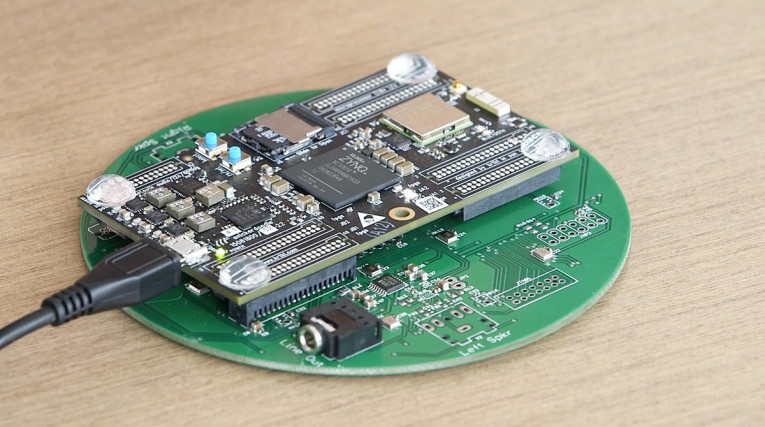

Aaware impressed at CES 2018 with demonstrations of multi-wake-word voice recognition in noisy environments. The demonstrations used its Sound Capture Platform, which leverages Avnet’s Xilinx dual-core SoC DSP platform and a scalable microphone array board featuring 13 microphones, combining all the resources needed for voice interface development. Aaware’s Acoustically Aware sound capture algorithms, paired with TrulyHandsfree wake word detection technology from Sensory, allow systems to adapt to differing noise interference without requiring calibration for different environments or integration of the reference signal.

Unlike standard sound capture platforms, which typically offer only fixed microphone array configurations, the Aaware platform has a concentric circular array of 13 microphones that can be configured in different combinations, allowing product performance to be tuned. The platform separates source speech (the wake word) and follow-on speech from interfering noise with ultra-low distortion, making it easier to integrate the system with third-party speech and natural language engines. In addition, source localization data can be forwarded to downstream applications, such as video, improving the performance of sophisticated multi-sensor AI applications, including those for industrial robotics and surveillance.

www.infineon.com | www.aaware.com